For years, artificial intelligence (AI) in banking worked like a helpful intern. It drafted emails, summarized reports, and answered customer questions. Useful, but not transformative.

Then came chatbots. These “digital assistants” promised better service but often delivered long answers to simple problems.

Now the model is shifting. A new wave of agentic AI moves beyond assistance to action. Instead of suggesting tasks, these systems can execute them: settling trades, checking compliance rules, or flagging suspicious transactions.

In short, banking is moving from AI that helps bankers to AI that acts like one.

What exactly is Agentic AI?

At its core, agentic AI refers to AI systems that can plan, decide, and execute tasks autonomously across multiple steps.

Unlike traditional AI tools that wait for a prompt before each response, an AI agent can pursue a goal across several steps without being prompted at each one.

According to a recent analysis by Microsoft’s financial services team, agentic systems allow banks to automate complex processes, from fraud checks to onboarding, because modern AI models can now perform multi-step reasoning and interact securely with internal application programming interfaces (APIs).

In banking, this means the system does more than interpret a prompt. It can run a process from start to finish. For example, it may review market data, check compliance rules, and execute a trade or settlement through connected systems.

Instead of waiting for a human to approve each step, the AI follows rules and completes the task automatically.

This ability to orchestrate tasks across systems is why many banks now describe agentic AI as “transactional AI.”

The AI does not just talk about financial actions; it carries them out.
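
To make the review-check-execute pattern concrete, here is a minimal Python sketch of a single agent workflow. It is a toy built on stated assumptions: the `TradeRequest` type, the restricted-symbol list, the notional limit and all function names are hypothetical, market data is a plain dictionary, and the "execute" step only writes to a log instead of calling a real trading or settlement API. What it illustrates is the control flow: each step must succeed before the next runs, and any failed rule halts the workflow.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical request type; field names are illustrative assumptions.
@dataclass
class TradeRequest:
    symbol: str
    quantity: int
    limit_price: float

# Hypothetical rule parameters (assumptions, not real bank limits).
RESTRICTED_SYMBOLS = {"XYZ"}
MAX_NOTIONAL = 1_000_000.0

# Each rule returns an error message, or None if the trade passes.
def check_restricted(req: TradeRequest, price: float) -> Optional[str]:
    if req.symbol in RESTRICTED_SYMBOLS:
        return f"{req.symbol} is on the restricted list"
    return None

def check_notional(req: TradeRequest, price: float) -> Optional[str]:
    notional = req.quantity * price
    if notional > MAX_NOTIONAL:
        return f"notional {notional:,.0f} exceeds limit {MAX_NOTIONAL:,.0f}"
    return None

RULES: List[Callable[[TradeRequest, float], Optional[str]]] = [
    check_restricted,
    check_notional,
]

def run_trade_workflow(
    req: TradeRequest, market_prices: Dict[str, float]
) -> Tuple[str, List[str]]:
    """Review market data, check rules, then execute; halt on the first failure."""
    log: List[str] = []

    # Step 1: review market data (a dict stands in for a real data feed).
    price = market_prices.get(req.symbol)
    if price is None:
        log.append(f"halt: no market data for {req.symbol}")
        return "halted", log
    if price > req.limit_price:
        log.append(f"halt: price {price} above limit {req.limit_price}")
        return "halted", log
    log.append(f"market price for {req.symbol}: {price}")

    # Step 2: apply every compliance rule before acting.
    for rule in RULES:
        error = rule(req, price)
        if error is not None:
            log.append(f"halt: {error}")
            return "halted", log
    log.append("compliance checks passed")

    # Step 3: execute. Stubbed: a real agent would call an order or
    # settlement API here, which this sketch does not model.
    log.append(f"executed {req.quantity} {req.symbol} @ {price}")
    return "executed", log
```

For example, `run_trade_workflow(TradeRequest("ABC", 100, 50.0), {"ABC": 49.5})` returns `"executed"` along with a log of each step, while a request for `"XYZ"` halts at the restricted-list check and nothing is executed.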

Generative AI vs. Agentic AI: What is the difference?

Both generative AI and agentic AI rely on large language models (LLMs). The key difference lies in what they are built to do.

Most people today are familiar with generative AI tools like ChatGPT or Claude. These systems generate content: text, code, reports, and summaries. They help staff interpret information but do not carry out actions.

But generative AI stops at the advice stage. Agentic AI goes further: it is designed to execute tasks. It can analyze data, make decisions within defined rules, and trigger actions across connected systems.

In simple terms, generative AI provides the map. Agentic AI drives the car.

Here is the difference in a banking context:

| Feature | Generative AI | Agentic AI |
|---|---|---|
| Core role | Produces information | Executes tasks |
| Interaction style | Prompt-response | Autonomous workflow |
| Example in banking | Draft compliance report | File compliance report automatically |
| Example in trading | Analyze a portfolio | Execute and settle trades |

Is Agentic AI already running banks?

Institutions such as Goldman Sachs, Lloyds Banking Group, and Deutsche Bank are no longer just testing AI tools. Many are integrating agent-based systems into daily operations to automate parts of trading, compliance, and risk monitoring.

These systems are now being given limited authority to handle tasks that once required manual checks.

For example, Lloyds Banking Group has announced plans to scale agentic AI across the bank, expecting over £100 million in value from AI initiatives in 2026, double the value generated the previous year.

Meanwhile, Wall Street firms like Goldman Sachs are experimenting with AI agents for internal processes such as trade accounting, onboarding checks, and compliance workflows.

The goal is not to replace bankers outright, but to remove the slowest parts of the process.

Where Agentic AI is showing up first

Banks are cautious by nature. That means agentic AI is not being unleashed everywhere at once. Instead, the technology is appearing first in areas with structured rules and heavy data flows, including:

- Anti-money laundering (AML)

Compliance teams must review thousands of alerts daily.

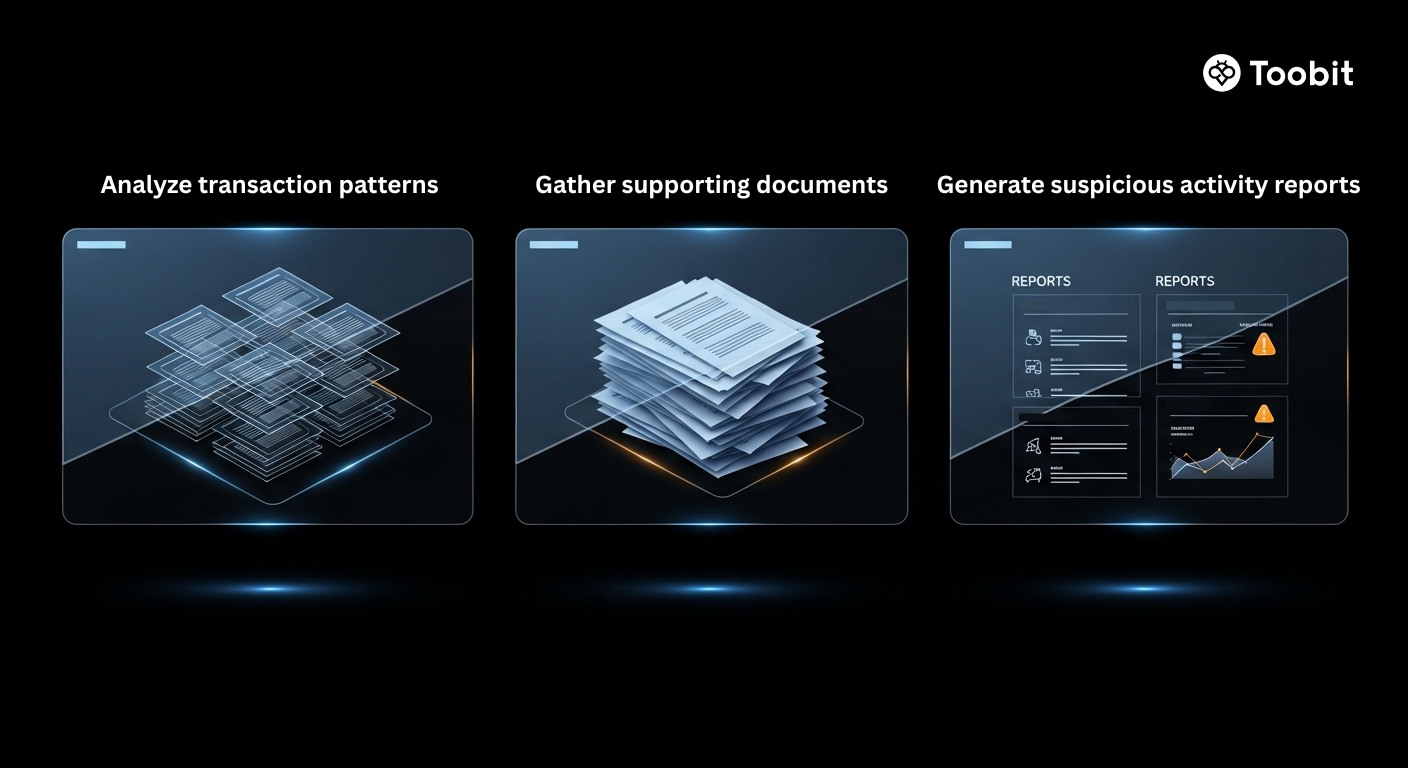

Agentic AI systems can help review these alerts and draft the written narratives that regulators expect.

Academic research has shown that agent-based AML systems can significantly speed up compliance workflows while improving narrative accuracy for regulators.

- Customer onboarding and know your customer (KYC)

Opening a bank account requires identity verification, document checks, and fraud screening.

Agentic systems can run these steps in sequence automatically.

- Trading operations

Back-office trade settlement, one of the most rule-heavy tasks in finance, is also becoming a prime candidate for automation.

Instead of staff reconciling trades manually, AI agents can verify records and update ledgers instantly.
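
At its core, trade reconciliation is a matching problem. The sketch below is one simple way to frame it in Python, assuming both sides report trades under a shared trade ID: match records by ID, post only matched trades to a ledger, and route every mismatch (a "break") to a human. `TradeRecord`, `reconcile`, `update_ledger` and the 0.01 price tolerance are illustrative assumptions, not any bank's actual system.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical trade record shared by both sides of the reconciliation.
@dataclass(frozen=True)
class TradeRecord:
    trade_id: str
    symbol: str
    quantity: int
    price: float

def reconcile(
    internal: List[TradeRecord],
    counterparty: List[TradeRecord],
    price_tolerance: float = 0.01,
) -> Tuple[List[str], List[Tuple[str, str]]]:
    """Match trades by ID; return matched IDs and breaks for human review."""
    theirs: Dict[str, TradeRecord] = {t.trade_id: t for t in counterparty}
    matched: List[str] = []
    breaks: List[Tuple[str, str]] = []
    for ours in internal:
        other = theirs.pop(ours.trade_id, None)
        if other is None:
            breaks.append((ours.trade_id, "missing at counterparty"))
        elif (ours.symbol, ours.quantity) != (other.symbol, other.quantity):
            breaks.append((ours.trade_id, "symbol or quantity mismatch"))
        elif abs(ours.price - other.price) > price_tolerance:
            breaks.append((ours.trade_id, "price mismatch"))
        else:
            matched.append(ours.trade_id)
    # Whatever is left was reported by the counterparty but not booked internally.
    for trade_id in theirs:
        breaks.append((trade_id, "missing internally"))
    return matched, breaks

def update_ledger(
    ledger: Dict[str, int], internal: List[TradeRecord], matched_ids: List[str]
) -> Dict[str, int]:
    """Post only matched trades to a simple position ledger (symbol -> quantity)."""
    matched = set(matched_ids)
    for t in internal:
        if t.trade_id in matched:
            ledger[t.symbol] = ledger.get(t.symbol, 0) + t.quantity
    return ledger
```

The design choice worth noting is that the agent never "fixes" a break on its own: anything that does not match exactly (within the tolerance) is returned for review, and only clean matches reach the ledger.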

But can banks trust autonomous AI?

Here is the uncomfortable truth: agentic AI introduces new risks.

Agentic AI systems can act on their own to complete tasks. If a system misreads market data or applies the wrong rule, it could trigger a chain of incorrect actions before a human steps in.

This risk is sometimes linked to what researchers call AI hallucinations, where a model produces confident but incorrect output, such as a wrong conclusion drawn from incomplete or misread data. In banking, such errors could affect trade execution, compliance checks, or transaction monitoring.

Experts warn about three key challenges:

- Transparency: AI decisions must be explainable to regulators.
- Bias in financial models: biased or unrepresentative training data could lead to unfair outcomes.
- Autonomy creep: AI systems may start making decisions beyond their intended scope.

To manage this risk, regulators and global organizations are working on safeguards.

For instance, regulators in the European Union (EU) are already addressing these concerns through frameworks like the EU AI Act, which requires strict oversight for high-risk AI systems in finance.

In other words, banks may automate workflows, but accountability still belongs to humans.

So… are AI agents taking over banking?

Not quite. The reality is more complex.

Agentic AI is rapidly becoming a digital workforce layer inside financial institutions. These systems can handle repetitive tasks faster and at scale.

But core responsibilities, such as risk decisions, client relationships, and strategic judgment, still require human oversight.

Even the most advanced AI deployments today operate with “human-in-the-loop” governance, meaning staff can intervene when needed.
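
One way to express human-in-the-loop governance in software is a policy layer that sits between the agent and the systems it can touch. The Python sketch below assumes a hypothetical allowlist of actions and a hypothetical monetary threshold for human sign-off; neither reflects any real bank's policy. Out-of-scope actions are rejected outright (a simple guard against autonomy creep), large actions are queued for a person to approve, and every decision is written to an audit log that could later be shown to auditors or regulators.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical policy values; real limits would come from a bank's risk framework.
ALLOWED_ACTIONS = {"flag_transaction", "request_documents", "settle_trade"}
APPROVAL_THRESHOLD = 50_000.0

@dataclass
class Action:
    name: str
    amount: float = 0.0

@dataclass
class Guardrail:
    review_queue: List[Action] = field(default_factory=list)
    audit_log: List[Tuple[str, float, str]] = field(default_factory=list)

    def submit(self, action: Action) -> str:
        """Decide whether the agent may proceed, must wait for a human, or is blocked."""
        if action.name not in ALLOWED_ACTIONS:
            decision = "rejected"  # outside the agent's permitted scope
        elif action.amount > APPROVAL_THRESHOLD:
            self.review_queue.append(action)  # a person must approve this one
            decision = "pending_human_approval"
        else:
            decision = "auto_approved"
        # Record every decision so it can be explained after the fact.
        self.audit_log.append((action.name, action.amount, decision))
        return decision
```

The scope check runs before the threshold check, so an action the agent was never meant to take is blocked immediately rather than landing in a human review queue.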

The financial institutions of the future may not replace bankers with AI, but they will almost certainly expect bankers to work alongside thousands of digital agents.

And that raises an intriguing question for the industry:

If AI agents can already execute transactions, monitor compliance, and analyze markets, what exactly will the banker of the next decade do?

One thing is clear: the job description is about to change.